In the high-stakes world of industrial operations, the "post-mortem" has long been our most reliable teacher. We have become experts at forensic analysis, mapping out exactly how we failed.

Despite this forensic prowess, global workplace fatality rates have plateaued. We are getting better at explaining accidents, but not at preventing them.

Thus, we need to shift our focus from investigating the past to managing the present in real time. We are moving into the era of the "pre-mortem", in which we use AI to imagine a future failure so that we can dismantle its causes today.

But achieving this shift requires more than just purchasing the latest technology.

It demands a clear-eyed understanding that AI is not a magic wand. Its performance depends entirely on two things: the quality of its fuel and the wisdom of its operator. The real drivers of AI in HSE are contextual data and human experience.

Current realities

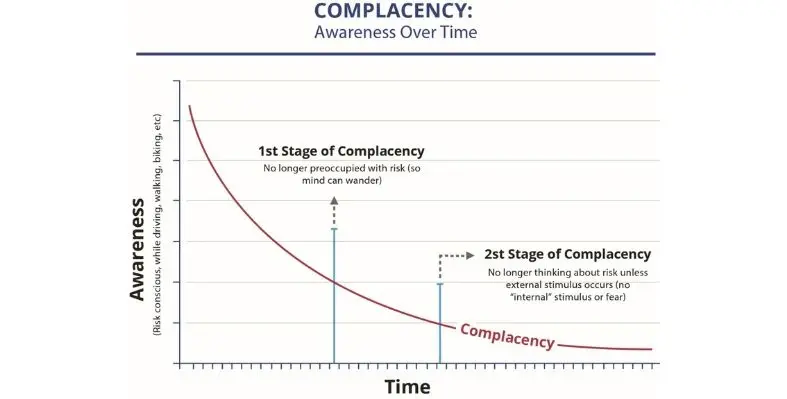

Right now, most safety programmes rely on periodic audits, morning inspections, or static risk assessments. We assume our controls are working because they were checked last Tuesday.

But the reality on the ground is fluid. A physical guard might be removed ten minutes after an inspection; a sensor might drift; a crew might be exhausted by mid-afternoon.

This lag time between a control failing and us finding out about it is the "grey zone" where incidents are born. Currently, our risk management is often a document sitting in a drawer while the environment it describes changes every hour. We are essentially managing safety in the rearview mirror, waiting for a deviation to become a disaster before we react.

Why data is the driver

AI is a high-performance engine, and engines need fuel. That fuel is contextual data.

Generic industry statistics won't help you catch a "weak signal" in your specific plant. To be effective, AI needs the messy, internal details of how your site runs, how your machinery behaves in your local humidity, and the specific language your teams use in their reports. However, we must be realistic: building this data foundation is a marathon, not a sprint. The challenges of cleaning fragmented records and accurately labelling historical data are significant. It requires a long-term commitment to digital systems that collect and collate information consistently before the AI can even begin to "learn."

Non-negotiable human homework

You cannot deploy AI to monitor controls if you haven't done the human homework first. A comprehensive Risk Assessment (RA), driven by the collective intelligence of people who have "been there and done that," is the mandatory starting point. Humans must define the controls and classify them. This is the only way to tell the AI what "good" actually looks like. This human wisdom is not a step we can skip. It is the bedrock upon which any effective AI system must be built.

Unlike a human inspector who assesses a control at a single point in time, an AI system continuously evaluates four critical dimensions of control health.

Presence: Is the shield actually there and active right now?

A control that is approved on paper is useless if it is not physically in place. AI evaluates presence by aggregating data from multiple sources. For example, a machine guard fitted with a limit switch sends a continuous signal to the AI platform; if the guard is removed, the signal stops, and the AI instantly flags a failure. Cameras equipped with computer vision can visually verify that required equipment is in place, such as confirming a ventilation fan is positioned at a confined space entry before a permit is finalised. In a refinery, a pressure relief valve is monitored not just by its own signal, but by confirming its isolation valves are in the correct open position, ensuring the relief path is always available.
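As a rough illustration of these presence checks, the sketch below treats a guard as present only while its limit switch keeps reporting, and a relief path as available only when every isolation valve is open. The names `GuardSignal`, `guard_present` and `relief_path_available`, and the 5-second timeout, are assumptions made for illustration and do not describe any particular platform.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class GuardSignal:
    """Last report from a guard's limit switch (hypothetical record)."""
    guard_id: str
    last_seen_s: float  # time of the most recent signal, in seconds


def guard_present(signal: GuardSignal, now_s: float, timeout_s: float = 5.0) -> bool:
    """The guard counts as present only if its switch reported within the timeout."""
    return (now_s - signal.last_seen_s) <= timeout_s


def relief_path_available(valve_ok: bool, isolation_valves_open: Sequence[bool]) -> bool:
    """The relief path needs a healthy valve and every isolation valve open."""
    return valve_ok and all(isolation_valves_open)
```

The design choice worth noting is that silence is treated as failure: if the signal stops for any reason, the control is reported as absent rather than assumed to be in place.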

Suitability: Is this still the right control given today's specific variables?

A control might be present, but that doesn't mean it is the right tool for the job at this moment. AI evaluates suitability by pulling in contextual data from weather APIs, maintenance schedules, and production plans. Consider a planned hot work activity requiring a fire watch. The AI checks the weather forecast and sees wind speeds are predicted to gust above the limit specified in the company's procedure. It determines that the standard "fire watch with one extinguisher" control is no longer suitable and automatically recommends upgrading the control or postponing the work.
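The hot work example can be sketched as a simple comparison between the forecast and the procedure limit. This is a minimal sketch, assuming the forecast has already been fetched as a list of gust speeds for the work window; the function name, the units and the wording of the recommendations are hypothetical.

```python
from typing import Sequence


def fire_watch_recommendation(forecast_gusts_kmh: Sequence[float],
                              gust_limit_kmh: float) -> str:
    """Compare the worst forecast gust in the work window with the procedure limit."""
    if not forecast_gusts_kmh:
        # Without a forecast, suitability cannot be judged automatically.
        return "no forecast available: refer to supervisor"
    if max(forecast_gusts_kmh) > gust_limit_kmh:
        return "upgrade control or postpone work"
    return "standard fire watch is suitable"
```

The limit itself comes from the company's procedure, which keeps the human-defined rule as the reference and leaves the software to check it against changing conditions.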

Effectiveness: Is it performing to its design specification at this very second?

A control can be present and suitable, but is it actually working? AI compares live output against engineered design parameters. For example, a local exhaust ventilation system for welding fumes was designed to maintain a specific airflow velocity. The AI, connected to a pressure sensor in the ducting, notices the airflow has dropped below the design spec. It hasn't failed entirely, but it is no longer performing effectively. The AI alerts the maintenance team to a partial blockage or fan degradation, allowing them to restore full protection before worker exposure increases.
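The distinction between "degraded" and "failed" in the ventilation example can be sketched as a three-way classification. This assumes the airflow velocity has already been derived from the duct sensor reading; `classify_airflow` and both thresholds are hypothetical values that would come from the engineered design, not from this sketch.

```python
def classify_airflow(measured_m_s: float,
                     design_min_m_s: float,
                     failed_below_m_s: float) -> str:
    """Classify ventilation performance against its design specification."""
    if measured_m_s >= design_min_m_s:
        return "effective"   # meeting the design velocity
    if measured_m_s > failed_below_m_s:
        return "degraded"    # still running, but below design spec
    return "failed"          # effectively no protection
```

The "degraded" band is where the early maintenance alert would be raised, before protection is lost altogether.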

Reliability: Based on historical patterns and current degradation, when is this control likely to fail?

This is the most predictive dimension. AI uses machine learning to analyse historical maintenance records and real-time sensor data, mapping the current state of equipment onto a predictive degradation curve. For a critical crane, the AI might learn that load cells in the overload system begin to drift hundreds of hours before they fail completely. When it detects minor drift in the live data, it cross-references the historical model and issues an alert: "Overload protection system showing early signs of degradation. Recommend inspection within 14 days to prevent unplanned failure."
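To make the idea of a degradation curve concrete, the sketch below swaps in the simplest possible model: a straight-line fit through recent drift readings, extrapolated to a failure limit. A real system would learn a richer curve from historical failures; the function name, the inputs and the linear assumption are all illustrative.

```python
from typing import Optional, Sequence


def hours_until_limit(hours: Sequence[float],
                      drift: Sequence[float],
                      limit: float) -> Optional[float]:
    """Estimate the operating hours left before drift reaches the limit.

    Fits a least-squares line through (hours, drift) and extrapolates.
    Returns None when there is no upward trend to extrapolate.
    """
    n = len(hours)
    if n < 2 or n != len(drift):
        return None
    mean_h = sum(hours) / n
    mean_d = sum(drift) / n
    sxx = sum((h - mean_h) ** 2 for h in hours)
    if sxx == 0:
        return None  # all readings taken at the same hour
    sxy = sum((h - mean_h) * (d - mean_d) for h, d in zip(hours, drift))
    slope = sxy / sxx
    if slope <= 0:
        return None  # drift is flat or improving
    intercept = mean_d - slope * mean_h
    hours_at_limit = (limit - intercept) / slope
    # Never report a negative number of hours remaining.
    return max(0.0, hours_at_limit - hours[-1])
```

An estimate like this would be turned into an inspection window, such as the 14-day recommendation in the alert above, by comparing it with the equipment's expected usage.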

The real power lies in AI's ability to act as a scenario generator. Humans are naturally limited in how many variables we can correlate at once. We might see a vibrating pump, a delayed maintenance schedule, and a tired crew as three separate, manageable issues. AI, however, doesn't see them in isolation.

Consider this scenario: In a chemical processing plant, an operator notes a slight 2% variance in a reactor's temperature. It is well within limits, so it is logged and forgotten. Meanwhile, the maintenance system flags that a pump's vibration is up 5%, and the shift roster shows the relief operator called in sick, meaning the current crew is working its fourth double shift in a week.

Taken one at a time, none of these would alarm a human, but the AI synthesises these weak signals. Its scenario generation models a "path to failure": fatigued operators are slower to notice the creeping temperature variance, which is being exacerbated by the degrading pump. It issues an alert: "High probability of temperature excursion in Unit 3 within the next 4 hours. Recommended action: Re-assign operator for break and inspect pump."
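One simple way to see how individually minor signals can add up is a "noisy-OR" combination, sketched below. This is a stand-in technique chosen for illustration, not the scenario model described above, and the `WeakSignal` record and its 0-to-1 scores are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class WeakSignal:
    """One minor deviation on a unit (hypothetical record)."""
    unit: str
    source: str
    score: float  # assumed severity between 0 and 1


def combined_risk(signals: Iterable[WeakSignal], unit: str) -> float:
    """Noisy-OR: the chance that at least one signal on the unit leads to trouble."""
    all_quiet = 1.0
    for s in signals:
        if s.unit == unit:
            all_quiet *= (1.0 - s.score)
    return 1.0 - all_quiet
```

With three signals on the same unit each scored at 0.3, no single signal would cross an alert threshold of 0.5, yet the combined figure is about 0.66. The scores and the threshold are invented here; the point is only that correlation across systems can surface a risk that each system alone would log and forget.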

Guarding the human element

Despite this potential, we must design these systems carefully. I am not a fan of the "point-and-click" safety approach where you take a photo and an app finds the hazard. Safety isn't just about spotting a trip hazard; it's about the thought process behind it. When we offload hazard identification entirely to an app, we stop thinking. We stop engaging our brains.

This is precisely why a good safety programme needs to start with the human-led Risk Assessment as the basis. We are not using AI to find a box left in an aisle. We are using it to monitor the complex interplay of controls designed by humans. This focus on control integrity rather than personal compliance is the key to avoiding the "Big Brother" trap.

When the system is designed to ask, "Is the guard in place?" rather than "Is Steve wearing his gloves?", it shifts the culture. The workforce sees the AI as a silent guardian of their equipment's health and their own safety, not a warden watching their every move.

This article was penned by JC Sekar, co-founder and CEO of AcuiZen Technologies, a Singapore-based company specialising in the digital transformation of operational workflows to enhance risk management, safety, operational efficiency and sustainability. HSE Review has edited the article for brevity.